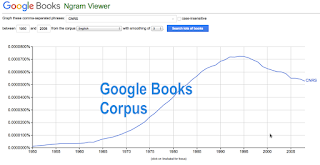

The most visible of the large corpora currently available comes from the Google Books project. There are several ways to interact with the data drawn from the several million books scanned by Google:

Google N-Grams Viewer:

http://books.google.com/ngrams/

This is the classic interface designed by Google, which allows users to plot single words and short phrases over time in a large subset (~5 million books) of the corpus. In addition, it provides searches in selected sets of curated works in the categories "American English," "British English," "English," "Chinese (simplified)," "English Fiction," "French," "German," "Hebrew," "Russian," and "Spanish."

Culturomics Bookworm Viewer:

This is in many ways perhaps the best interface for querying the Google Books corpus. Developed by the Culturomics folks at Harvard, it limits itself to those digitized texts for which metadata (full title, publication date, publication place, etc.) is available on OpenLibrary.org. As a result, users can run queries in highly selective corpora based on subject (books on world history, American books on science, etc.), though these corpora are much smaller than the full Google Books collection.

BYU Google Books Viewer:

This interface is the only one of the above that allows users to search longer strings of words from the corpus. It also shows links to the books in which words appear by year (note, however, that this initiates a new search in Google Books, which may not necessarily match the original data used in graphing). It offers the same corpora as available in N-Grams, including American works (155 billion words), British works (34 billion words), fiction (91 billion words), Spanish works (45 billion words), and a 1,000,000-book sample (89 billion words).
